The clues and facts add up. Let’s sit and think for a minute:

In what rational universe could someone simply issue electronic scrip — or just announce that they intend to — and create, out of the blue, billions of dollars of value?

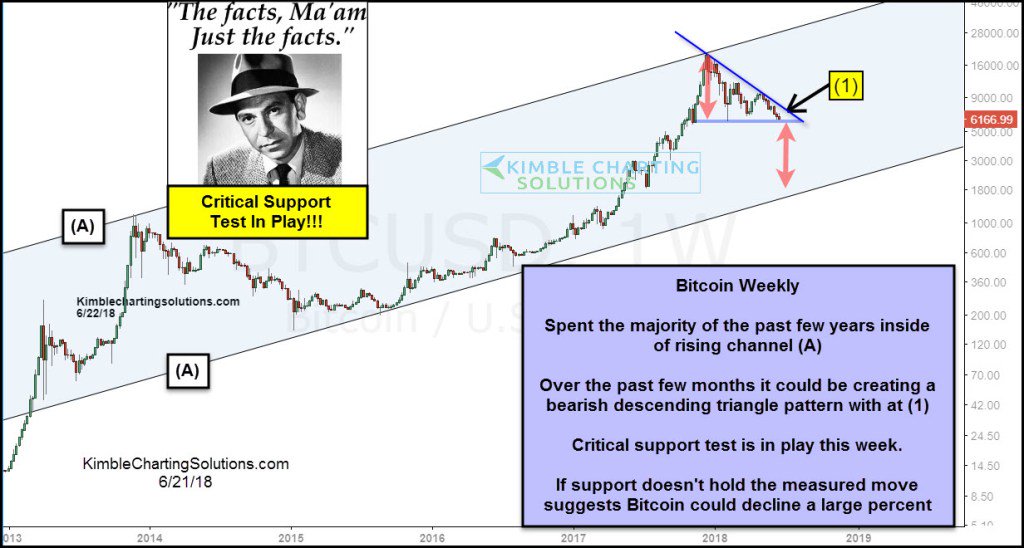

Bitcoin tangent

Did you guys notice something really interesting? The financial guys who really love bitcoin are often the ones who either blew up or closed their funds due to poor performance. The two most prominent fund-manager bitcoin boosters fit that description. It almost feels like they are happy to have found their Hail Mary pass. Meanwhile, the most prominent managers who have performed well and didn’t blow up tend to be the ones who don’t like bitcoin and think it’s stupid, a bubble or whatever.

Think about that for a second. Oh, and after bitcoin plunged, that former hedge fund guy put his new bitcoin hedge fund on hold (buying high and selling low?). No wonder he didn’t do well with his hedge fund; if you make decisions based on short-term volatility like that, you are bound to get whipsawed and lose money.

This is interesting because we can never really understand and know everything. But it is useful to know who you can listen to and who you should ignore. Sometimes, this saves a lot of time! From http://brooklyninvestor.blogspot.com/

—

Monday, April 30, 2018

Warren Buffett: Bitcoin is Gambling Not Investing

In an exclusive interview with Yahoo Finance in Omaha, Neb., leading up to Berkshire Hathaway’s annual shareholder meeting, which will be held on May 5, Buffett laid out his latest thinking on cryptocurrency investing. He nailed it.

“There’s two kinds of items that people buy and think they’re investing,” he says. “One really is investing and the other isn’t.” Bitcoin, he says, isn’t.

“If you buy something like a farm, an apartment house, or an interest in a business… You can do that on a private basis… And it’s a perfectly satisfactory investment. You look at the investment itself to deliver the return to you. Now, if you buy something like bitcoin or some cryptocurrency, you don’t really have anything that has produced anything. You’re just hoping the next guy pays more.”

When you buy cryptocurrency, Buffett continues, “You aren’t investing when you do that. You’re speculating. There’s nothing wrong with it. If you wanna gamble somebody else will come along and pay more money tomorrow, that’s one kind of game. That is not investing.”

Buffett’s point is that the assets he lists such as a farm, an apartment house, etc., generate income. Bitcoin does not.

I would add that there is another type of asset people hold, and that is money. As Ludwig von Mises taught us, money is the most liquid good, and people hold it because of this liquidity. They know they can instantly exchange it, at a fairly stable price, nearly anywhere for goods and services.

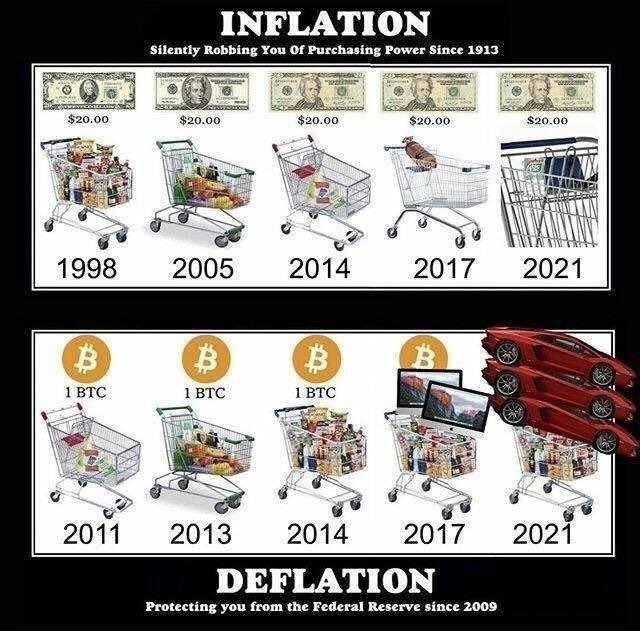

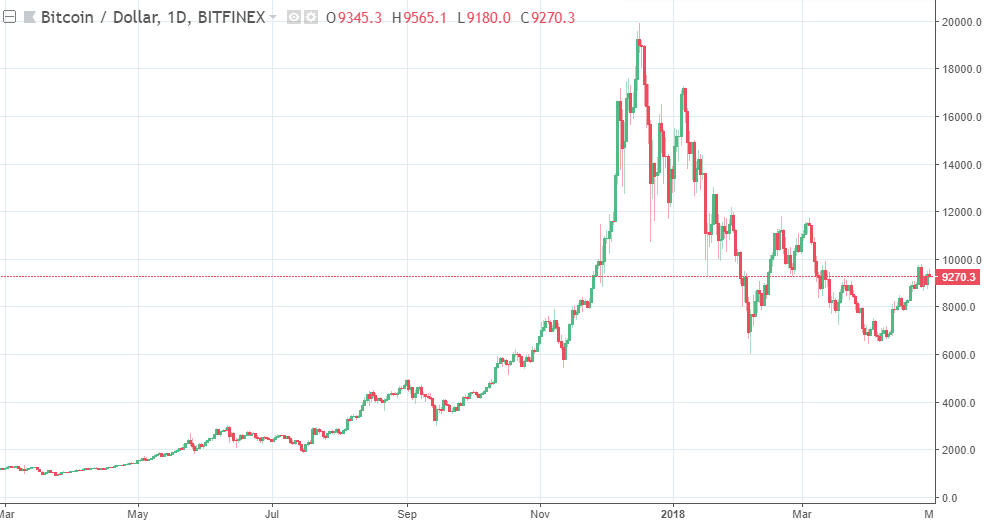

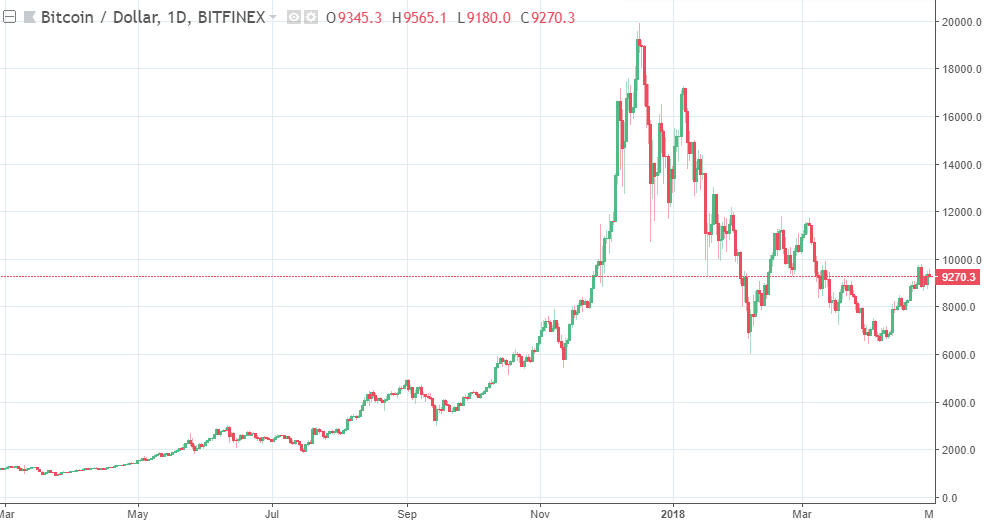

This is where Bitcoin and other cryptocurrencies fail in the money category. At present they are far from an instrument that can be exchanged for any good or service, and they are far from stable in price. Many people who purchased Bitcoin over the last 6 months have lost as much as 50% of their purchasing power. That is not a stable asset, not even when compared to the U.S. dollar, which the Federal Reserve runs in crony, reckless fashion.

Moreover, the idea of a world where a cryptocurrency is the world’s medium of exchange is a frightening notion. It is quite simply a remarkable way for government to track all transactions and prohibit transactions in specific books and other goods that it doesn’t want individuals to buy.

The idea that the government can’t track Bitcoin is a delusional view held by Bitcoin fanboys.

The Intercept recently reported:

Classified documents provided by whistleblower Edward Snowden show that the National Security Agency indeed worked urgently to target bitcoin users around the world — and wielded at least one mysterious source of information to “help track down senders and receivers of Bitcoins,” according to a top-secret passage in an internal NSA report dating to March 2013. The data source appears to have leveraged the NSA’s ability to harvest and analyze raw, global internet traffic while also exploiting an unnamed software program that purported to offer anonymity to users, according to other documents.

Although the agency was interested in surveilling some competing cryptocurrencies, “Bitcoin is #1 priority,” a March 15, 2013 internal NSA report stated.

-Robert Wenzel

—

What is money, Bastiat? If you understand money, then the Bitcoin scam becomes obvious.

—

Bitcoin is the greatest scam in history

It’s a colossal pump-and-dump scheme, the likes of which the world has never seen.

By Bill Harris Apr 24, 2018

Okay, I’ll say it: Bitcoin is a scam.

In my opinion, it’s a colossal pump-and-dump scheme, the likes of which the world has never seen. In a pump-and-dump game, promoters “pump” up the price of a security creating a speculative frenzy, then “dump” some of their holdings at artificially high prices. And some cryptocurrencies are pure frauds. Ernst & Young estimates that 10 percent of the money raised for initial coin offerings has been stolen.

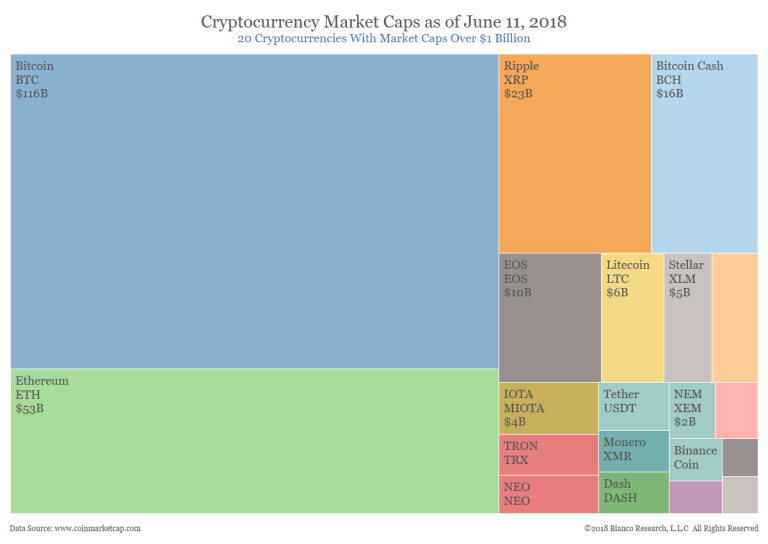

The losers are ill-informed buyers caught up in the spiral of greed. The result is a massive transfer of wealth from ordinary families to internet promoters. And “massive” is a massive understatement — 1,500 different cryptocurrencies now register over $300 billion of “value.”

It helps to understand that a bitcoin has no value at all.

Promoters claim cryptocurrency is valuable as

1. Means of Payment.

Bitcoins are accepted almost nowhere, and some cryptocurrencies nowhere at all. Even where accepted, a currency whose value can swing 10 percent or more in a single day is useless as a means of payment.

2. Store of Value.

Extreme price volatility also makes bitcoin undesirable as a store of value. And the storehouses — the cryptocurrency trading exchanges — are far less reliable and trustworthy than ordinary banks and brokers.

3. Thing in Itself.

A bitcoin has no intrinsic value. It only has value if people think other people will buy it for a higher price — the Greater Fool theory.

Some cryptocurrencies, like Sweatcoin, which is redeemable for workout gear, are the equivalent of online coupons or frequent flier points — a purpose better served by simple promo codes than complex encryption. Indeed, for the vast majority of uses, bitcoin has no role. Dollars, pounds, euros, yen and renminbi are better means of payment, stores of value and things in themselves.

Cryptocurrency is best suited for one use: criminal activity. Because transactions can be anonymous (law enforcement cannot easily trace who buys and sells), its use is dominated by illegal endeavors. Most heavy users of bitcoin are criminals, such as the operators of the Silk Road marketplace and the WannaCry ransomware. Too many bitcoin exchanges have experienced spectacular heists, such as NiceHash and Coincheck, or outright fraud, such as Mt. Gox and Bitfunder. Way too many Initial Coin Offerings are scams: 418 of the 902 ICOs in 2017 have already failed.

Hackers are getting into the act. It’s estimated that 90 percent of all remote hacking is now focused on bitcoin theft by commandeering other people’s computers to mine coins.

Even ordinary buyers are flouting the law. Tax law requires that every sale of cryptocurrency be recorded as a capital gain or loss and, of course, most bitcoin sellers fail to do so. The IRS recently ordered one major exchange to produce records of every significant transaction.

And yet, a prominent Silicon Valley promoter of bitcoin proclaims that “Bitcoin is going to transform society … Bitcoin’s been very resilient. It stayed alive during a very difficult time when there was the Silk Road mess, when Mt. Gox stole all that Bitcoin …” He argues the criminal activity shows that bitcoin is strong. I’d say it shows that bitcoin is used for criminal activity.


Bitcoin transactions are sometimes promoted as instant and nearly free, but they’re often relatively slow and expensive. It takes about an hour for a bitcoin transaction to be confirmed, and the bitcoin system is limited to five transactions per second. MasterCard can process 38,000 per second. Transferring $100 from one person to another costs about $6 using a cryptocurrency exchange, and well less than $1 using an electronic check.

Bitcoin is absurdly wasteful of natural resources. Because it is so compute-intensive, it takes as much electricity to create a single bitcoin — a process called “mining” — as it does to power an average American household for two years. If bitcoin were used for a large portion of the world’s commerce (which won’t happen), it would consume a very large portion of the world’s electricity, diverting scarce power from useful purposes.

In what rational universe could someone simply issue electronic scrip — or just announce that they intend to — and create, out of the blue, billions of dollars of value? It makes no sense.

All of this would be a comic sideshow if innocent people weren’t at risk. But ordinary people are investing some of their life savings in cryptocurrency. One stock brokerage is encouraging its customers to purchase bitcoin for their retirement accounts!

It’s the job of the SEC and other regulators to protect ordinary investors from misleading and fraudulent schemes. It’s time we gave them the legislative authority to do their job.

William H. Harris Jr. is the founder of Personal Capital Corporation, a digital wealth management firm that provides personal financial software and investment services, where he sits on the board of directors.

Read the full article here: https://www.recode.net/2018/4/24/17275202/bitcoin-scam-cryptocurrency-mining-pump-dump-fraud-ico-value

COUNTER-ARGUMENT: https://www.forbes.com/sites/ktorpey/2018/04/24/founding-paypal-ceo-bill-harris-says-bitcoin-is-a-scam-heres-why-hes-wrong/2/#2d9379a166b9

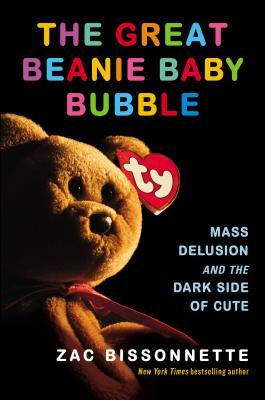

Where have we seen this type of behavior before?

—


UPDATE: Friday April 27th 2018

Read: http://thecharlieton.com/whitney-tilson-why-the-hell-didnt-i-listen-to-charlie-munger/

Lesson: be humble about what you attempt.

Below is an email from Whitney Tilson of Kase Learning announcing his short selling conference:

Program Guide: Kase Learning Short Selling Conference, May 3, 2018

Attached is the program guide, which includes an agenda for the day and bios of all of the speakers. Registration and continental breakfast begin at 7:15am, the first speaker is at 8:15am, there are morning, lunch and afternoon breaks, and the last speaker ends at 4:15pm, followed by a networking cocktail reception until 7:00pm. The NYAC is on the corner of Central Park South and Seventh Avenue, and it has a dress code – no jeans, shorts, sneakers or t-shirts.

This full-day event is the first of its kind dedicated solely to short selling and will feature 22 of the world’s top practitioners who will share their wisdom, lessons learned, and best, actionable short ideas. I’ve seen many of the speakers’ presentations and they’re awesome! Companies that will be pitched include Tesla, Disney, Kraft-Heinz and Stericycle, plus internet ad fraud and gold.

The idea for the conference is rooted in the fact that this long bull market has inflicted absolute carnage on short sellers, and even seasoned veterans are throwing in the towel. This capitulation, however, combined with the increasing level of overvaluation, complacency, hype and even fraud in our markets, spells opportunity for courageous investors, so there is no better time for this conference.

Reporters from all of the major media outlets will be there, and CNBC is covering it as well. I was on their Halftime Report yesterday discussing the conference: www.cnbc.com/video/2018/04/26/kases-whitney-tilson-talks-the-art-and-pain-of-short-selling.html. I also just published the fourth, final (and my favorite) article in a series I’ve written entitled Lessons from 15 Years of Short Selling: https://seekingalpha.com/article/4166837-lessons-15-years-short-selling-veterans-advice

I’d be grateful if you’d help spread the word about the conference among your friends and colleagues, and wanted to pass along a special offer: when they register at http://bit.ly/Shortconf, they can use my friends and family discount code, FF20, to save 20% ($600) off the current rate.

I look forward to seeing you next week!

Sincerely yours,

Whitney Tilson

Founder & CEO

Kase Learning, LLC

5 W. 86th St., #5E

New York, NY 10024

(646) 258-0687

WTilson@KaseLearning.com